The MIMIC Simulator performance testing methodology attempts to overcome

common problems with published performance benchmarks, especially in the

IoT arena. In this article we examine one recently published report and discuss

how to make it better.

The main problem with any performance test is that the results apply only to the

specific test scenario. If the test scenario is carefully selected, the results will be

relevant for a wide variety of situations. If the test report is good, then the exact

methodology is documented, so you can evaluate it, and determine whether the

results can be useful for you. For example, this report ran a single test scenario for an uncommon situation: a small set (3) of high-frequency publishers and 15 mosquitto_sub subscribers. Plain-text MQTT is used only in trivial situations, and there is no indication that TLS transport was measured. Its latency measurements also suffer from the problem of synchronizing clocks across different systems.

Specifically, the abstract says right at the beginning:

"The evaluation of the brokers is performed by a realistic test scenario"

but then, in section 4.1.1, Evaluation Conditions:

"

Number of topics: 3 (via 3 publisher threads)

Number of publishers: 3

Number of subscribers: 15 (subscribing to all 3 topics)

Payload: 64 bytes

Topic names used to publish large number of messages: ‘topic/0’, ‘topic/1’, ‘topic/2’

Topic used to calculate latency: ‘topic/latency’

"

So, rather than testing a large-scale environment, the report used a small set (3) of high-frequency publishers and 15 mosquitto_sub subscribers. In our experience, no recent broker has any problem with fewer than 1000 publishers.

Second, section 4 details the subscriber back-end:

"The subscriber machine used the “mosquitto_sub” command line

subscribers, which is an MQTT client for subscribing to topics and

printing the received messages. During this empirical evaluation, the

“mosquitto_sub” output was redirected to the null device (/dev/null) "

That is, the test used the simple mosquitto_sub client, which is single-threaded. In addition, the subscribers subscribe to all topics, probably via the wildcard topic #. So, out of the many code paths in the broker, the least commonly used one is tested. If your application uses a topic hierarchy, with different subscribers subscribing to different topic trees, then topic-matching performance needs to be exercised.
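To make the point about topic matching concrete, here is a minimal sketch of the wildcard matching rules from the MQTT specification. It is only an illustration of the work a broker has to do per message, not the implementation used by any particular broker; the function name topic_matches and the sample topics are our own.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """Return True if an MQTT topic filter matches a concrete topic name.

    Illustrative only: real brokers typically use a trie or similar index
    instead of comparing every filter against every published topic.
    """
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")

    # Filters that start with a wildcard do not match topics starting with '$'.
    if topic.startswith("$") and filter_levels[0] in ("#", "+"):
        return False

    for i, level in enumerate(filter_levels):
        if level == "#":
            # Multi-level wildcard: valid only as the last level, and it
            # matches the parent level plus everything below it.
            return i == len(filter_levels) - 1
        if i >= len(topic_levels):
            # The filter has more levels than the topic.
            return False
        if level != "+" and level != topic_levels[i]:
            # A literal level must match exactly; '+' matches any one level.
            return False

    # No '#' seen, so the number of levels must be identical.
    return len(filter_levels) == len(topic_levels)


if __name__ == "__main__":
    # A catch-all subscription is decided at the very first level.
    print(topic_matches("#", "topic/0"))
    # A hierarchical filter has to be compared level by level.
    print(topic_matches("sensors/+/temp", "sensors/kitchen/temp"))
    print(topic_matches("sensors/+/temp", "sensors/kitchen/humidity"))
```

With a # subscription the decision is made at the first level, so a test in which every subscriber receives everything says little about how a broker behaves when thousands of distinct hierarchical filters have to be checked.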

Third, while QoS 0, 1 and 2 seem to be tested, only a single payload size was used, and there is no indication that TLS transport was measured.
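As a rough sketch of what broader coverage could look like, the following enumerates a test matrix over QoS level, payload size and transport. The payload sizes other than 64 bytes and the transport labels are hypothetical values chosen for illustration; they do not come from the report.

```python
from itertools import product

QOS_LEVELS = (0, 1, 2)
# Hypothetical payload sizes; the report measured 64 bytes only.
PAYLOAD_BYTES = (64, 1024, 16384)
# Hypothetical labels for plain-text and TLS-secured MQTT.
TRANSPORTS = ("tcp", "tls")


def build_test_matrix():
    """Return one dict per combination of QoS, payload size and transport."""
    return [
        {"qos": qos, "payload_bytes": size, "transport": transport}
        for qos, size, transport in product(QOS_LEVELS, PAYLOAD_BYTES, TRANSPORTS)
    ]


def covered_by_report(case):
    """True for cases matching the report: 64-byte payload over plain TCP."""
    return case["payload_bytes"] == 64 and case["transport"] == "tcp"


if __name__ == "__main__":
    matrix = build_test_matrix()
    covered = [case for case in matrix if covered_by_report(case)]
    print(f"{len(covered)} of {len(matrix)} combinations covered")
```

Even with this small matrix, the single payload size and plain-text transport account for only 3 of the 18 combinations.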

Fourth, they attempt to measure latency correctly, i.e., in section 4.1.2:

"Latency is defined as the time taken by a system to transmit a message

from a publisher to a subscriber"

but their methodology is flawed, since it is almost impossible to synchronize the clocks on two separate systems to millisecond accuracy, and in Table 6 the latencies are in the 1 ms range. So, the measurements rely on clock synchronization of unknown accuracy.

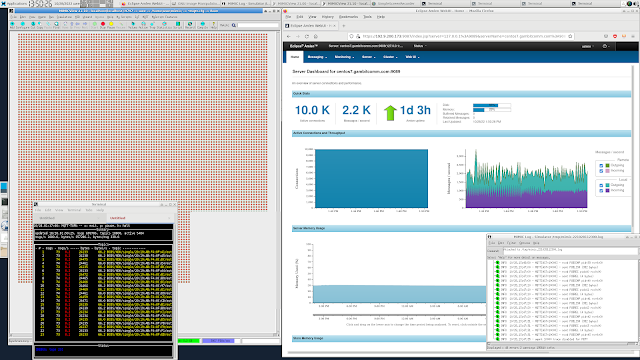
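The clock synchronization problem described above can be shown with simple arithmetic. The sketch below is not the MIMIC methodology; it is a toy model, assuming a symmetric network path and a hypothetical clock offset between the two machines, that compares a one-way measurement across two clocks with a round-trip measurement taken on a single clock.

```python
def simulate(true_latency_ms: float, clock_offset_ms: float):
    """Compare two ways of measuring latency in a toy model.

    true_latency_ms: actual one-way delay (assumed equal in both directions).
    clock_offset_ms: how far the subscriber clock runs ahead of the
                     publisher clock (hypothetical, unknown in practice).
    Returns (one_way_measured_ms, round_trip_half_ms).
    """
    send_pub_clock = 1000.0  # publish timestamp, publisher clock

    # One-way: the receive timestamp is taken on the subscriber clock,
    # so the unknown offset is added to the result.
    recv_sub_clock = send_pub_clock + true_latency_ms + clock_offset_ms
    one_way_measured = recv_sub_clock - send_pub_clock

    # Round trip: the message is echoed back and both timestamps are
    # taken on the publisher clock, so the offset cancels out.
    echo_recv_pub_clock = send_pub_clock + 2 * true_latency_ms
    round_trip_half = (echo_recv_pub_clock - send_pub_clock) / 2

    return one_way_measured, round_trip_half


if __name__ == "__main__":
    for offset in (5.0, -3.0):
        one_way, half_rtt = simulate(true_latency_ms=1.0, clock_offset_ms=offset)
        print(f"offset {offset:+.1f} ms: one-way {one_way:.1f} ms, "
              f"half round trip {half_rtt:.1f} ms")
```

With a true latency of 1 ms, an offset of only a few milliseconds dominates the one-way figure and can even make it negative, while the single-clock round trip is unaffected by the offset.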

For an example of the MIMIC latency testing methodology see this blog post.
